This week, we share news of API vulnerabilities affecting Avelo Airlines, WhatsApp, and Oracle, and an incident notification from OpenAI to API developers about potential information exposure. We also highlight a new survey from F5 on the role of API security in agentic AI systems. And to wrap up, we have an article examining the risks from AI-generated software and what API teams need to know.

Vulnerability: Massive API Data Exposure at Avelo Airlines

Security researcher Alex Schapiro reveals API flaws he found in Avelo Airlines’ reservation system that exposed millions of passenger records, including PII and payment-related data.

The main API vulnerability in this case was a broken authentication check. The API accepted a 6-character reservation code without also requiring the passenger’s last name. This allowed anyone with just a valid code to retrieve the corresponding record, without having to guess the relevant passenger’s name. To make matters worse, the API was “very generous” with the passenger data it returned.
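
As a rough illustration of the missing control, the following Python sketch requires both the reservation code and the last name, and returns only a trimmed set of fields. The `RESERVATIONS` store, the field names, and `lookup_reservation` are hypothetical and are not taken from Avelo's system or the researcher's report.

```python
import hmac
from typing import Optional

# Hypothetical in-memory store; a real system would query a database.
RESERVATIONS = {
    "ABC123": {
        "last_name": "Rivera",
        "flight": "XP101",
        "seat": "12A",
        "email": "p.rivera@example.com",
        "card_last4": "4242",
    },
}

# Only these fields are ever returned, which limits data exposure.
PUBLIC_FIELDS = ("flight", "seat")


def lookup_reservation(code: str, last_name: str) -> Optional[dict]:
    """Return a trimmed record only when code AND last name both match."""
    record = RESERVATIONS.get(code.strip().upper())
    if record is None:
        return None
    supplied = last_name.strip().casefold().encode()
    expected = record["last_name"].casefold().encode()
    # Reject empty names; compare in constant time for equal-length inputs.
    if not supplied or not hmac.compare_digest(supplied, expected):
        return None
    return {field: record[field] for field in PUBLIC_FIELDS}
```

A call with a valid code but a wrong or empty last name returns `None`, the same result as an unknown code, so the response does not confirm which codes exist.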

The API also lacked rate-limiting controls, which allowed brute-force enumeration of all possible reservation codes and, as a consequence, passenger records. The researcher included an eye-opening estimate of the cost to brute-force all combinations: just $435 using AWS Lambda.
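
To put that estimate in perspective, here is a small back-of-the-envelope calculation in Python. It assumes the 6-character codes draw from 36 symbols (A-Z plus 0-9); that alphabet size is an assumption for illustration, not a detail stated above. Only the code length and the $435 figure come from the report.

```python
ALPHABET_SIZE = 36       # assumption: uppercase letters plus digits
CODE_LENGTH = 6          # from the report
REPORTED_COST_USD = 435  # the researcher's estimate


def keyspace(alphabet_size: int, length: int) -> int:
    """Number of possible codes of the given length."""
    return alphabet_size ** length


def cost_per_million(total_cost_usd: float, total_codes: int) -> float:
    """Average cost of one million guesses."""
    return total_cost_usd / (total_codes / 1_000_000)


total = keyspace(ALPHABET_SIZE, CODE_LENGTH)  # 2,176,782,336 under this assumption
per_million = cost_per_million(REPORTED_COST_USD, total)
```

Under the 36-symbol assumption, that works out to roughly $0.20 per million guesses, which is why a missing rate limit turns a weak check into a bulk data exposure.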

Broken authentication and excessive data exposure are serious API vulnerabilities on their own. Combined with missing rate limits, their impact escalates sharply, allowing sensitive data to be harvested at massive scale.
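
One common way to add the missing rate limit is a per-client token bucket. The sketch below is a minimal in-memory version in Python; the class name, the parameters, and the idea of keying on a client identifier are illustrative assumptions, and a production deployment would more likely enforce this at a gateway with shared state.

```python
import time
from typing import Callable, Dict, Tuple


class TokenBucketLimiter:
    """Per-client token bucket: bursts up to `capacity`, refilled at `rate` tokens/sec."""

    def __init__(self, capacity: int, rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        # client_id -> (tokens remaining, time of last update)
        self._buckets: Dict[str, Tuple[float, float]] = {}

    def allow(self, client_id: str) -> bool:
        now = self.clock()
        tokens, last = self._buckets.get(client_id, (float(self.capacity), now))
        # Refill in proportion to elapsed time, capped at the bucket capacity.
        tokens = min(self.capacity, tokens + (now - last) * self.rate)
        if tokens >= 1:
            self._buckets[client_id] = (tokens - 1, now)
            return True
        self._buckets[client_id] = (tokens, now)
        return False
```

A request handler would call `allow(client_id)` before each lookup and return HTTP 429 when it returns `False`. The `clock` parameter exists only to make the limiter easy to test.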

These are important API security controls to get right. Read the report.

Vulnerability: 3.5 Billion WhatsApp Accounts Exposed

Continuing on the topic of API rate limiting, researchers have shared a detailed report describing how they were able to exfiltrate 3.5 billion WhatsApp user phone numbers through a vulnerable API.

This is another API enumeration attack, but it differs from the Avelo Airlines case because here the API behaved as intended. Social media platforms often provide lookup services that allow users to find other accounts and search for friends. But that search function can also be abused for large-scale enumeration attacks. Beyond social media, we’ve seen similar cases before, including the Trello incident that exposed the information of over 15 million users, again through a vulnerable search API.

The original research highlights the challenge of allowing legitimate users to perform a high volume of lookups while at the same time preventing abuse and exfiltration of massive data sets. The solution requires some sophistication to distinguish normal from abnormal user behavior.
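
One simple signal for telling the two apart is the number of distinct targets a client looks up within a time window: a normal user repeats a handful of contacts, while an enumerator touches a new one on almost every request. The Python sketch below illustrates the idea. The class name and thresholds are hypothetical, and it is not the mitigation described in the research.

```python
import time
from collections import deque
from typing import Callable, Deque, Dict, Tuple


class DistinctLookupMonitor:
    """Flags clients that look up too many distinct targets within a sliding window."""

    def __init__(self, max_distinct: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_distinct = max_distinct
        self.window_seconds = window_seconds
        self.clock = clock
        self._events: Dict[str, Deque[Tuple[float, str]]] = {}

    def record(self, client_id: str, target: str) -> bool:
        """Record one lookup; return True if the client now looks like an enumerator."""
        now = self.clock()
        events = self._events.setdefault(client_id, deque())
        events.append((now, target))
        # Drop lookups that have fallen out of the window.
        while events and now - events[0][0] > self.window_seconds:
            events.popleft()
        distinct_targets = {seen for _, seen in events}
        return len(distinct_targets) > self.max_distinct
```

Repeated lookups of the same number never trip the monitor, while a client cycling through new numbers does. In practice this would be one signal among several, alongside account age, contact-list plausibility, and request volume.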

Part of the challenge is that the value of these attacks lies less in the data retrieved about any individual user than in the sheer volume of data. At scale, the data becomes highly valuable for automated phishing attacks or fraud. These incidents demonstrate the need for carefully considered API rate-limiting strategies that balance the intended user experience against the risk of API enumeration. Read the article.

Incident: Risks from OpenAI’s API Developer Platform

A quick note on something that popped up in my inbox today. OpenAI is notifying developers about a security incident that may have exposed private information for users of the OpenAI API development platform.

platform(dot)openai(dot)com

The issue appears to stem from a third-party vendor, Mixpanel, which OpenAI uses on the frontend of its API development site for analytics. OpenAI hasn’t shared details on the technical cause yet, but the incident report advises developers to stay alert for credible-looking phishing attempts or unusual emails.

If you’ve done any API development or testing through OpenAI’s platform, this is one to keep an eye on. Read the incident report.

Vulnerability: API Routing Flaws in Oracle Identity Manager

While most API endpoints require authentication, some endpoints are deliberately excluded from this step. Skipping authentication is a common source of API vulnerabilities, and we see similar patterns in many previously reported cases.

A recent example involves Oracle’s Identity Manager (OIM). In OIM, authentication is skipped for requests ending with the query string “?WSDL”. This is typically a request for a publicly available SOAP service description file, so skipping authentication in this case makes sense.

However, a common coding mistake made the WSDL check too loose. Developers often use risky pattern checks such as “ends with” or “contains”, which are easily exploited. An attacker can just tack on “?WSDL” to the end of any API request, tricking the API code into treating it as a public WSDL request and so bypassing authentication entirely.
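
The difference between a loose and a strict check can be sketched in a few lines of Python. This is an illustration of the general pattern only, not Oracle's code: the function names, the example paths, and the `WSDL_PATHS` allowlist are all hypothetical.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist of paths that actually serve a WSDL document.
WSDL_PATHS = {"/services/ExampleService"}


def is_public_wsdl_loose(url: str) -> bool:
    """The risky pattern: any URL that merely ends with ?WSDL is treated as public."""
    return url.upper().endswith("?WSDL")


def is_public_wsdl_strict(method: str, url: str) -> bool:
    """Skip authentication only for GET <known service path>?WSDL, matched exactly."""
    parts = urlsplit(url)
    return (
        method.upper() == "GET"
        and parts.path in WSDL_PATHS
        and parts.query.upper() == "WSDL"
    )
```

With the loose check, a request such as `/api/v1/admin/users?WSDL` skips authentication. The strict check parses the URL, matches the path against known WSDL endpoints, and requires the whole query string to be `WSDL`.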

API authentication bypasses like this are common, so this is another vulnerability to watch out for. Read the report.

Survey: Impact of API Vulnerabilities for AI Enablement

F5 has published a new survey report, “2025 Strategic Imperatives: Securing APIs for the Age of Agentic AI in APAC”. The survey looks at API security trends across organizations in the Asia Pacific region and the impact of API vulnerabilities on AI enablement strategies.

“APIs are now central to digital transformation, partner integration, and AI enablement in Asia Pacific”

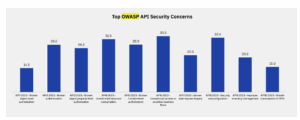

APIs are key enablers of AI integrations and autonomous workflows. The report highlights how many of the OWASP API Security Top 10 risks align with organizational concerns around AI, as autonomous agents can amplify the impact of issues like API6:2023 “Unrestricted Access to Sensitive Business Flows” and API4:2023 “Unrestricted Resource Consumption”.

The survey also found that API authentication and authorization remain the weakest links, with many teams struggling to find an effective solution for vulnerabilities such as API1:2023 Broken Object Level Authorization (BOLA) and API2:2023 Broken Authentication.

Undocumented and unmanaged APIs are another major concern. A majority of survey participants said that post-production techniques like code scanning and traffic-based detection are proving inadequate. Non-deterministic AI agents are likely to exacerbate these risks by automatically uncovering and exposing undocumented APIs.

This raises the urgency for API teams to address the root cause by adopting a pre-production approach: maintaining a fully documented, tracked and enforceable API inventory. Read the survey.
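
As a minimal sketch of what an "enforceable" inventory could mean in practice, the Python below rejects any request whose method and path are not in a documented list. The `INVENTORY` entries and function names are hypothetical; a real setup would typically derive the list from an OpenAPI definition and enforce it at a gateway.

```python
import re
from typing import Iterable, List, Pattern, Tuple

# Hypothetical inventory of documented operations.
INVENTORY = [
    ("GET", "/orders"),
    ("GET", "/orders/{order_id}"),
    ("POST", "/orders"),
]

CompiledInventory = List[Tuple[str, Pattern[str]]]


def compile_inventory(entries: Iterable[Tuple[str, str]]) -> CompiledInventory:
    """Turn path templates into regexes; each {param} matches one path segment."""
    compiled = []
    for method, template in entries:
        pieces = re.split(r"(\{[^/{}]+\})", template)
        regex = "".join(
            "[^/]+" if piece.startswith("{") else re.escape(piece)
            for piece in pieces
        )
        compiled.append((method.upper(), re.compile(regex)))
    return compiled


def is_documented(method: str, path: str, compiled: CompiledInventory) -> bool:
    """True only if the method and full path match a documented operation."""
    return any(
        m == method.upper() and rx.fullmatch(path) is not None
        for m, rx in compiled
    )
```

Anything that does not match, such as a leftover debug route or an undocumented method on a known path, is denied by default rather than discovered later in traffic logs.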

Article: Deterministic Guardrails for AI-Generated Code

Security has long played “second fiddle” to feature delivery, especially in API development, where new services and updates are pushed out at a breakneck pace with ever-shorter release cycles.

Now, with AI-generated code entering the mix, the question is: is the priority gap between security and time-to-market about to get better or a whole lot worse? That’s the question Ramya Murthy explores in a recent Medium article, and her conclusions are sobering.

“One in five organizations have already suffered material business damage from AI-generated code”

Murthy highlights a key limitation: AI often lacks context. It doesn’t understand an API’s business logic, regulatory requirements, or security policies. Instead, it generates code based on learned patterns, producing mixed results.

“45% of AI-generated code fails basic security checks”

From a security standpoint, AI can help teams to improve productivity through automation and rapid code generation. But developers can’t rely on AI alone. Rigorous security checks and thorough testing will be essential to ensure AI-generated code meets organizational security policies and regulatory standards for APIs. Read the article.
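
One form such a check can take is a deterministic gate in CI that fails the build when generated code breaks a fixed rule. The Python sketch below uses the standard `ast` module to flag two example patterns. The rules are illustrative assumptions and are not taken from Murthy's article, and a real pipeline would rely on established static analysis tools with a much broader rule set.

```python
import ast
from typing import List, Tuple

# Example policy only: calls that generated code may not contain.
BANNED_CALLS = {"eval", "exec"}


def find_violations(source: str) -> List[str]:
    """Return policy violations found in Python source, ordered by line number."""
    found: List[Tuple[int, str]] = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        if isinstance(node.func, ast.Name) and node.func.id in BANNED_CALLS:
            found.append((node.lineno, f"call to {node.func.id}()"))
        for kw in node.keywords:
            # Flag TLS verification being switched off, e.g. get(url, verify=False).
            if (kw.arg == "verify" and isinstance(kw.value, ast.Constant)
                    and kw.value.value is False):
                found.append((node.lineno, "verify=False disables TLS checks"))
    return [f"line {lineno}: {message}" for lineno, message in sorted(found)]
```

Because the same input always produces the same verdict, a check like this can block a merge regardless of how the code was written or which model produced it.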
